The Cone of Code Uncertainty

As hurricanes approach the shores of North America, we are barraged with maps of the possible tracks those storms can take. Those tracks start pretty close together, but diverge widely the further into the future they predict. Simplified, those storm tracks form a cone of uncertainty. A storm in the Atlantic could slide into the Gulf of Mexico and land anywhere from the Texas-Mexico border to Panama City Beach, FL. It all depends on a myriad of tiny factors that turn the storm this way or that. As time progresses, the storm moves along, and the cone of uncertainty shrinks. Its eventual landfall becomes easier to predict, and we can more effectively target resources.

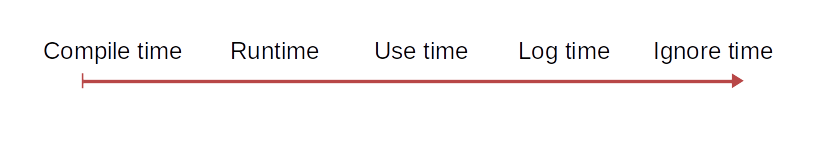

Much like hurricanes, running code lives in a cone of uncertainty. A function or method call is a mysterious black box until we have a result. If something goes wrong, we must delve into this cone of code uncertainty to find the problem. We use log statements and debuggers and a whole lot of time. We trace through the code to find where it diverges from the assumptions in our mental model. Eventually we find the problem, fix it, and breathe a sigh of relief.

Sometimes we only need to dig in a little bit, find the problem, and fix it. Other times, we have to dig down into very large functions with lots of nested calls and complex conditional logic. The latter have a much larger cone of uncertainty. Until we get all the way down, we don't really know where the problem could be. Bugs are especially hard to find when they manifest in a generic block of code, but the real problem is somewhere three layers up from that.
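To make that last case concrete, here is a small sketch in Python. Every function name in it is invented for illustration and is not taken from any real codebase. The bad input enters at the entry point, but the exception is raised in a generic helper three calls further down.

```python
def average(values):
    # Generic helper: this is where the failure finally shows up,
    # as a ZeroDivisionError when the list is empty.
    return sum(values) / len(values)


def summarize(readings):
    # One layer up: silently drops negative readings, then delegates.
    valid = [r for r in readings if r >= 0]
    return average(valid)


def build_report(raw):
    # Two layers up: parses the comma-separated input, then delegates.
    readings = [float(x) for x in raw.split(",") if x.strip()]
    return summarize(readings)


def handle_request(raw):
    # Three layers up: the problematic input arrives here.
    return build_report(raw)
```

Calling `handle_request("-1,-2")` fails inside `average`, which knows nothing about requests or negative readings. Finding the real cause means walking back up through every layer.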

Often it seems like this is just how software development is. But it doesn't have to be. If we understand that we have this cone of uncertainty, we can focus on shrinking it down as much as we can.

How can we reduce the uncertainty in our code? The uncertainty starts the moment we call a function or method. That function is a black box to the caller: the caller can't know what is happening inside until a result or an error is returned. The function can call other functions, call out to external resources, and just be generally complicated. We can reduce the uncertainty by shrinking the amount of code in a function, reducing the call depth within that function, and enforcing strong invariants around that function.

Achieving these characteristics is straightforward, though occasionally tedious. First, if there is a lot of code in a function or method, decompose it into smaller functions. This doesn't reduce the amount of code, but it does clarify and isolate the chunks of logic within it. Once the function has been decomposed, we can look at whether each sub-call really belongs inside that function, or whether we can pull it out to the caller and reduce the call depth. Separating function calls so that they run consecutively creates boundaries. At those boundaries we can make clearer assertions about the intermediate values, enforcing stronger invariants on and between the calls. Once we have these smaller, more understandable chunks of code, we can validate our assumptions around them and fix the inconsistencies that lead to bugs.
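The steps above can be sketched in Python as follows. This is only an illustration under assumed names: `parse_readings`, `keep_valid`, `average`, and `handle_request` are all hypothetical. Each step is a small function, the caller runs them consecutively instead of nesting them, and the intermediate values are checked at each boundary.

```python
def parse_readings(raw):
    # Step 1: turn comma-separated text into a list of floats.
    return [float(x) for x in raw.split(",") if x.strip()]


def keep_valid(readings):
    # Step 2: keep only the non-negative readings.
    return [r for r in readings if r >= 0]


def average(values):
    # Step 3: generic helper; it relies on the caller to pass a non-empty list.
    return sum(values) / len(values)


def handle_request(raw):
    # The caller runs the steps one after another (call depth of one)
    # and enforces an invariant on each intermediate value.
    readings = parse_readings(raw)
    if not readings:
        raise ValueError("no readings in input")

    valid = keep_valid(readings)
    if not valid:
        raise ValueError(f"all {len(readings)} readings were negative")

    return average(valid)
```

With this shape, an input such as `"-1,-2"` fails in `handle_request` with a message that names the actual problem, rather than as a division error deep inside a generic helper. The cone has fewer places for a bug to hide.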
